While augmented reality (AR) and virtual reality (VR) are envisioned as the next iteration of the internet, immersing us in new digital worlds, the associated headset hardware and virtual keyboard interfaces create new opportunities for hackers.

Such are the findings of computer scientists at the University of California, Riverside, detailed in two papers to be presented this week at the annual USENIX Security Symposium in Anaheim, a leading international conference on cybersecurity.

The emerging metaverse technology, now under intensive development by Facebook's Mark Zuckerberg and other tech titans, relies on headsets that interpret our bodily motions (reaches, nods, steps, and blinks) so that we can navigate new AR and VR worlds to play games, socialize, meet co-workers, and perhaps shop or conduct other forms of business.

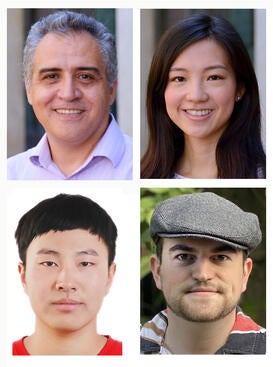

A computer science team at UCR’s Bourns College of Engineering led by professors Jiasi Chen and Nael Abu-Ghazaleh, however, has demonstrated that spyware can watch and record our every motion and then use artificial intelligence to translate those movements into words with 90 percent or better accuracy.

“Basically, we show that if you run multiple applications, and one of them is malicious, it can spy on the other applications,” Abu-Ghazaleh said. “It can spy on the environment around you, for example showing [that] people are around you and how far away they are. And it can also expose to the attacker your interactions with the headset.”

For instance, if you take a break from a virtual game to check your Facebook messages by air-typing your password on a virtual keyboard generated by the headset, the spyware could capture that password. Similarly, spies could potentially interpret your body movements to reconstruct your actions during a virtual meeting in which confidential information is disclosed and discussed.

The two papers to be presented at the cybersecurity conference are co-authored by Abu-Ghazaleh and Chen together with Yicheng Zhang, a UCR computer science doctoral student, and Carter Slocum, a visiting assistant professor at Harvey Mudd College who earned his doctorate at UCR.

The first paper is titled “It's all in your head(set): Side-channel attacks on AR/VR systems.” With Zhang as the lead author, it details how hackers can recover a victim's hand gestures, voice commands, and keystrokes on a virtual keyboard with accuracy exceeding 90 percent. The paper further shows how spies can identify applications as they are launched and perceive other people standing near the headset user, estimating their distance to within about 4 inches (10.3 cm).

The second paper, “Going through the motions: AR/VR keylogging from user head motions,” digs deeper into the security risk of using a virtual keyboard. With Slocum as lead author, it shows that the subtle head movements users make while typing on virtual keyboards are enough for spies to infer the text being typed. The researchers then developed a system, dubbed TyPose, that uses machine learning to extract these head-motion signals and automatically infer the words or characters a user is typing.

Both papers are expected to alert the tech industry to cybersecurity weaknesses in AR/VR systems.

“We demonstrate feasibilities of attacks, and then we do responsible disclosure,” Abu-Ghazaleh said. “We tell the companies that, hey, this is what we were able to do. And then we give them time to see if they want to fix it before we publish our findings.”